ETL Pipelines: How Data Moves from Raw to Insights

Introduction

- Businesses collect raw data from various sources.

- ETL (Extract, Transform, Load) pipelines help convert this raw data into meaningful insights.

- This blog explains ETL processes, tools, and best practices.

1. What is an ETL Pipeline?

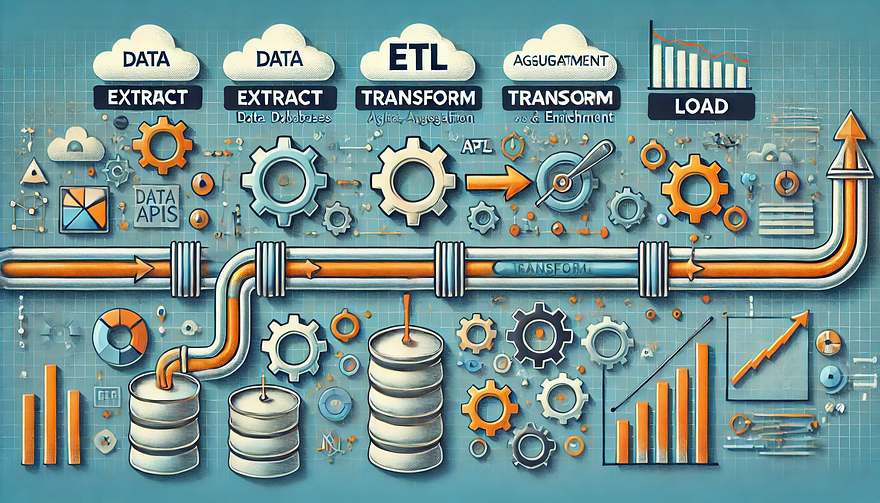

- An ETL pipeline is a process that Extracts, Transforms, and Loads data into a data warehouse or analytics system.

- It helps clean, structure, and prepare data for decision-making.

1.1 Key Components of ETL

- Extract: Collect data from multiple sources (databases, APIs, logs, files).

- Transform: Clean, enrich, and format the data (filtering, aggregating, converting).

- Load: Store data into a data warehouse, data lake, or analytics platform.
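
The three components can be sketched as three small functions chained together. This is a minimal illustration, not a production design: the `RAW_CSV` sample, the `orders` table, and the function names are all hypothetical, and an in-memory SQLite database stands in for a real data warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical raw input; in practice this would come from a file, API, or database.
RAW_CSV = """order_id,customer,amount
1,alice,10.50
2,bob,
3,alice,4.25
"""

def extract(text):
    """Extract: read raw rows from a CSV source."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Transform: drop rows with a missing amount and convert types."""
    cleaned = []
    for row in rows:
        if not row["amount"]:
            continue  # skip records that have no amount
        cleaned.append(
            (int(row["order_id"]), row["customer"].title(), float(row["amount"]))
        )
    return cleaned

def load(conn, rows):
    """Load: write the cleaned rows into a target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()

def run_pipeline(conn, text=RAW_CSV):
    load(conn, transform(extract(text)))

if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")  # stand-in for a warehouse
    run_pipeline(conn)
    print(conn.execute(
        "SELECT customer, SUM(amount) FROM orders GROUP BY customer"
    ).fetchall())
```

Keeping each stage as its own function makes it easier to test and replace one stage without touching the others.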

2. Extract: Gathering Raw Data

- Data sources: Databases (MySQL, PostgreSQL), APIs, Logs, CSV files, Cloud storage.

- Extraction methods:

  - Full Extraction: Pulls the entire dataset on every run.

  - Incremental Extraction: Pulls only records that are new or updated since the last run.

  - Streaming Extraction: Reads records continuously as they are produced, in near real time (Kafka, Kinesis).
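
The difference between full and incremental extraction can be shown with a "watermark", a stored timestamp of the last record already extracted. The sketch below assumes a hypothetical `customers` table with an `updated_at` column holding ISO 8601 strings, and uses in-memory SQLite as the source; real sources may need a different change-tracking column.

```python
import sqlite3

def extract_full(conn):
    """Full extraction: read every row from the source table."""
    return conn.execute(
        "SELECT id, name, updated_at FROM customers ORDER BY id"
    ).fetchall()

def extract_incremental(conn, last_watermark):
    """Incremental extraction: read only rows changed after the last run."""
    rows = conn.execute(
        "SELECT id, name, updated_at FROM customers "
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_watermark,),
    ).fetchall()
    # The new watermark is the latest timestamp seen; unchanged if nothing is new.
    new_watermark = max((r[2] for r in rows), default=last_watermark)
    return rows, new_watermark

def make_demo_source():
    """Build a small hypothetical source table for the example."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, updated_at TEXT)"
    )
    conn.executemany("INSERT INTO customers VALUES (?, ?, ?)", [
        (1, "Alice", "2024-01-01T09:00:00"),
        (2, "Bob", "2024-01-02T09:00:00"),
        (3, "Chen", "2024-01-03T09:00:00"),
    ])
    return conn

if __name__ == "__main__":
    src = make_demo_source()
    rows, watermark = extract_incremental(src, "2024-01-01T12:00:00")
    print(rows, watermark)
```

The pipeline would persist the returned watermark between runs so that each run starts where the previous one stopped.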

3. Transform: Cleaning and Enriching Data

- Data Cleaning: Remove duplicates, handle missing values, normalize formats.

- Data Transformation: Apply business logic, merge datasets, convert data types.

- Data Enrichment: Add contextual data (e.g., join customer records with location data).

- Common Tools: Apache Spark, dbt, Pandas, SQL transformations.
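
The cleaning and enrichment steps above can be sketched in plain Python (the same ideas carry over to Pandas, Spark, or SQL). The field names, the `DD/MM/YYYY` input date format, and the `LOCATIONS` lookup are assumptions made for illustration only.

```python
from datetime import datetime

# Hypothetical lookup table used for enrichment.
LOCATIONS = {"C1": "Chennai", "C2": "Mumbai"}

def clean(records):
    """Remove duplicates, fill missing values, and normalize formats."""
    seen = set()
    out = []
    for rec in records:
        key = rec["customer_id"]
        if key in seen:
            continue  # drop duplicates, keeping the first occurrence
        seen.add(key)
        name = rec.get("name")
        out.append({
            "customer_id": key,
            # Missing names become "unknown"; others are trimmed and title-cased.
            "name": name.strip().title() if name else "unknown",
            # Normalize DD/MM/YYYY to ISO 8601 (YYYY-MM-DD).
            "signup_date": datetime.strptime(
                rec["signup_date"], "%d/%m/%Y"
            ).date().isoformat(),
            # Convert spend to a float, treating missing values as 0.0.
            "spend": float(rec.get("spend") or 0.0),
        })
    return out

def enrich(records, locations=LOCATIONS):
    """Join each customer record with its city from the lookup table."""
    return [
        {**rec, "city": locations.get(rec["customer_id"], "unknown")}
        for rec in records
    ]

if __name__ == "__main__":
    raw = [
        {"customer_id": "C1", "name": " alice ", "signup_date": "05/01/2024", "spend": "12.5"},
        {"customer_id": "C1", "name": " alice ", "signup_date": "05/01/2024", "spend": "12.5"},
        {"customer_id": "C3", "name": None, "signup_date": "17/02/2024", "spend": ""},
    ]
    for row in enrich(clean(raw)):
        print(row)
```

Whether a missing value should be filled with a default, as here, or rejected outright is a business decision that depends on how the data is used downstream.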

4. Load: Storing Processed Data

- Load data into a Data Warehouse (Snowflake, Redshift, BigQuery, Synapse) or a Data Lake (S3, Azure Data Lake, GCS).

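- Loading strategies:

  - Full Load: Overwrites the existing data with a complete new copy.

  - Incremental Load: Adds only new or changed records to the existing data.

  - Batch vs. Streaming Load: Scheduled loads at fixed intervals vs. continuous, near-real-time ingestion.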
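
A rough sketch of the first two strategies, using in-memory SQLite as a stand-in for a warehouse. The `sales` table and function names are hypothetical. Note that the incremental version here goes slightly beyond a pure append: it uses SQLite's `INSERT OR REPLACE`, so a row whose `id` already exists is replaced rather than duplicated.

```python
import sqlite3

def create_target(conn):
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales (id INTEGER PRIMARY KEY, amount REAL)"
    )

def full_load(conn, rows):
    """Full load: replace the table contents with the new snapshot."""
    with conn:  # one transaction: commit on success, roll back on error
        conn.execute("DELETE FROM sales")
        conn.executemany("INSERT INTO sales (id, amount) VALUES (?, ?)", rows)

def incremental_load(conn, rows):
    """Incremental load: add new rows; replace rows whose id already exists."""
    with conn:
        conn.executemany(
            "INSERT OR REPLACE INTO sales (id, amount) VALUES (?, ?)", rows
        )

if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    create_target(conn)
    full_load(conn, [(1, 10.0), (2, 20.0)])
    incremental_load(conn, [(2, 25.0), (3, 30.0)])
    print(conn.execute("SELECT id, amount FROM sales ORDER BY id").fetchall())
```

Cloud warehouses generally offer their own bulk-load and merge commands for this purpose; the SQL above only illustrates the idea.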

5. ETL vs. ELT: What’s the Difference?

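- ETL transforms data before loading it into the target system. It is best for structured data and compliance-focused workflows, for example when sensitive fields must be cleaned or masked before they are stored.

- ELT (Extract, Load, Transform) loads the raw data first and transforms it inside the target system. It is ideal for cloud-native analytics, where the warehouse's own compute can handle massive datasets efficiently.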
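
For contrast with the transform-then-load order of ETL, here is a minimal ELT-style sketch: raw records are landed untouched in a staging table, and the cleaning and aggregation happen afterwards in SQL inside the database. SQLite stands in for a cloud warehouse, and the `raw_orders` and `customer_totals` tables are hypothetical.

```python
import sqlite3

def elt(conn, raw_rows):
    """Load raw rows first, then transform them with SQL inside the database."""
    # Load: land the raw records untouched in a staging table.
    conn.execute(
        "CREATE TABLE raw_orders (order_id TEXT, customer TEXT, amount TEXT)"
    )
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", raw_rows)
    # Transform: clean and aggregate inside the database.
    conn.execute("""
        CREATE TABLE customer_totals AS
        SELECT LOWER(TRIM(customer)) AS customer,
               SUM(CAST(amount AS REAL)) AS total
        FROM raw_orders
        WHERE amount <> ''
        GROUP BY LOWER(TRIM(customer))
    """)
    conn.commit()
    return conn.execute(
        "SELECT customer, total FROM customer_totals ORDER BY customer"
    ).fetchall()

if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    raw = [("1", " Alice", "10.5"), ("2", "bob", ""),
           ("3", "alice ", "4.25"), ("4", "Bob", "3")]
    print(elt(conn, raw))
```

Because the raw table is kept, the transformation can be changed and re-run later without extracting the data again.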

6. Best Practices for ETL Pipelines

✅ Optimize Performance: Use indexing, partitioning, and parallel processing.

✅ Ensure Data Quality: Implement validation checks and logging.

✅ Automate & Monitor Pipelines: Use orchestration tools (Apache Airflow, AWS Glue, Azure Data Factory).

✅ Secure Data Transfers: Encrypt data in transit and at rest.

✅ Scalability: Choose cloud-based ETL solutions for flexibility.
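
As one illustration of the data quality practice above, validation checks and logging can be combined in a single step that separates good rows from rejected ones. This is only a sketch: the required fields, the non-negative `amount` rule, and the `etl.quality` logger name are assumptions, and real pipelines would define their own rules.

```python
import logging

logger = logging.getLogger("etl.quality")

def validate(rows, required=("id", "amount")):
    """Split rows into valid and rejected, logging the reason for each rejection."""
    valid, rejected = [], []
    for row in rows:
        missing = [f for f in required if row.get(f) in (None, "")]
        if missing:
            logger.warning("rejected row %r: missing %s", row, missing)
            rejected.append(row)
        elif not isinstance(row["amount"], (int, float)) or row["amount"] < 0:
            logger.warning("rejected row %r: amount must be a non-negative number", row)
            rejected.append(row)
        else:
            valid.append(row)
    logger.info(
        "validated %d rows: %d valid, %d rejected",
        len(rows), len(valid), len(rejected),
    )
    return valid, rejected

if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    sample = [
        {"id": 1, "amount": 5.0},
        {"id": 2, "amount": None},
        {"id": 3, "amount": -1},
    ]
    good, bad = validate(sample)
    print(len(good), "valid,", len(bad), "rejected")
```

Returning the rejected rows instead of silently dropping them lets the pipeline store them for later inspection.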

7. Popular ETL Tools

Conclusion

- ETL pipelines streamline data movement from raw sources to analytics-ready formats.

- Choosing the right ETL/ELT strategy depends on data size, speed, and business needs.

- Automated ETL tools improve efficiency and scalability.

WEBSITE: https://www.ficusoft.in/data-science-course-in-chennai/
